Andrew Feldman

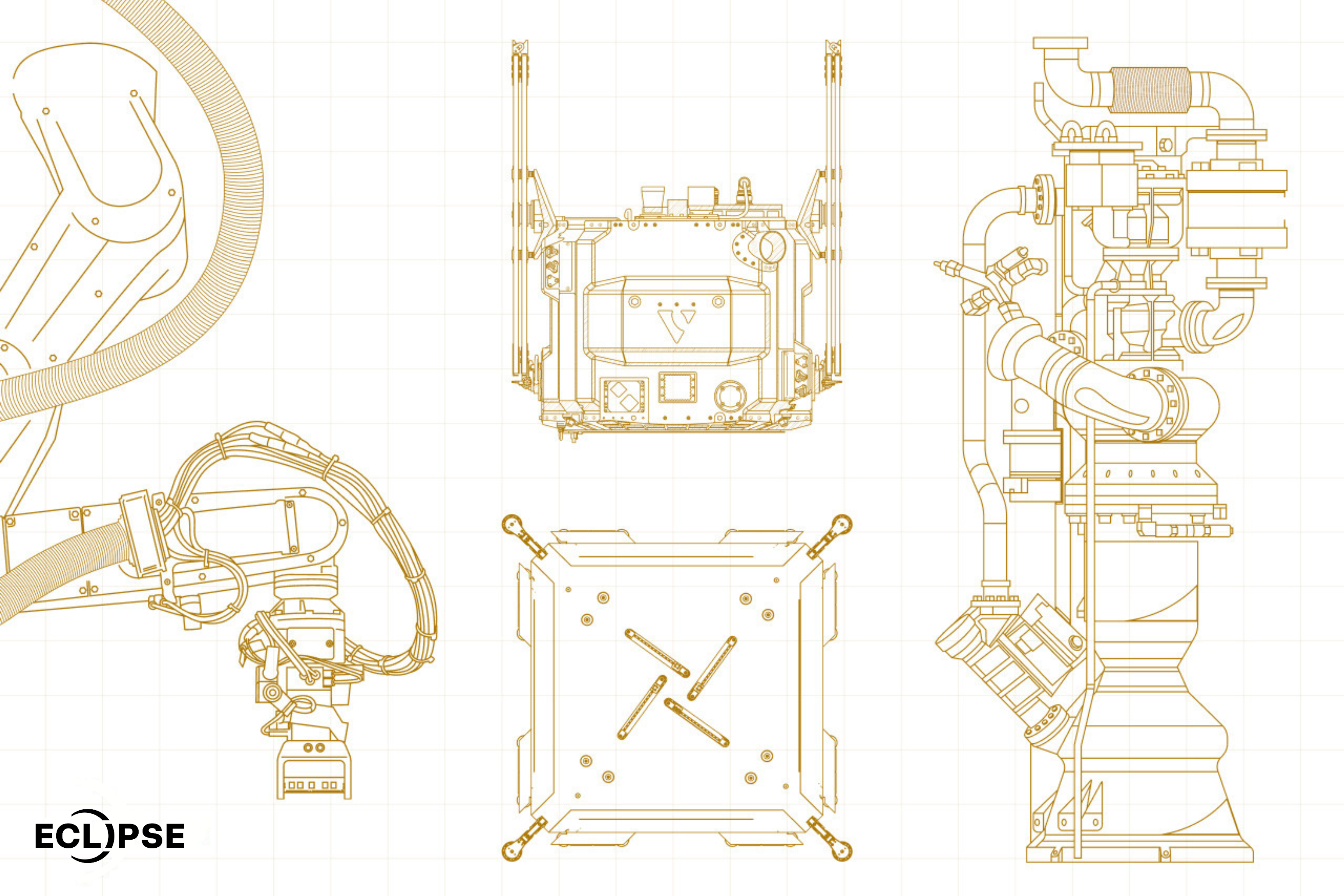

Cerebras Systems is building the world’s fastest AI infrastructure. Its wafer-scale processor — the world’s largest — brings massive compute and memory together on a single device, eliminating the bottlenecks of conventional systems.

As larger and more capable AI models spend more compute planning, checking, and refining their work, Cerebras speed translates into greater interactivity and intelligence. Across cloud, dedicated, and on-prem deployments, Cerebras powers coding at the speed of thought, agents that never stall, life-like conversational AI, and a new class of automation.

The market is validating this revolutionary architecture with a steady drumbeat of adoption. In 2024 and 2025, Cerebras and strategic partner G42 established the world’s leading sovereign AI infrastructure to train and serve state-of-the-art models like Jais. On January 14, 2026, OpenAI announced a partnership with Cerebras to add 750MW of ultra-fast compute. On February 12, 2026, GPT‑5.3‑Codex‑Spark became the first public milestone in that relationship, showing how faster serving changes the experience of coding and iteration. And on March 13, 2026, AWS announced Cerebras as its first cloud provider for disaggregated inference, bringing Trainium + CS‑3 to Amazon Bedrock. Moreover, leading companies are applying Cerebras’ unmatched inference speed to build products others can’t. Cerebras is going mainstream as the foundational infrastructure for more interactive and intelligent AI.

“The Cerebras team has come together to build a new class of computer system, designed from first principles with the singular goal of accelerating artificial intelligence work to transform society.”

Andrew Feldman, Co-Founder and CEO, Cerebras Systems